Source: Efficiency with Algorithms, Performance with Data Structures - Chandler Carruth

- Niklaus Wirth – Algorithms + Data Structures = Programs

There are two sides to the performance coin:

Efficiency through Algorithms – How much work is required by a task.

- Improving efficiency involves doing less work.

- An efficient program is one that does the minimum (that we’re aware of) amount of work to accomplish a given task.

Performance through Data Structures – How quickly a program does it work.

- Improving performance involves doing work faster

- But there is no such thing as a “performant”, not even work in the English language.

- There is essentially no point at which a program cannot do work any faster… until you hit Bremermann’s limit…

- What does it mean to improve the performance of software?

- The software is going to run on a specific, real machine

- There is some theoretical limit on how quickly it can do work

- Try to light up all the transistors, get the most work done as fast as possible.

All Comes back to Watts

- Every circuit not used on a processor is wasting power

- Don’t reduce this to the absurd — it clearly doesn’t make sense to use more parts of the CPU without improving performance!

Algorithms

- Complexity theory and analysis

- Common across higher-level languages, etc.

- Very well understood by most (I hope)

- Improving algorithmic efficiency requires finding a different way of solving the problem.

- Algorithmic complexity is a common mathematical concept that spans languages and processors. Don’t get lazy, you have to pay attention to your algorithms.

- Example: – “it’s about doing less work”, analyze the current approach and find ways to do it more efficiency

- Initially, you might have a basic O(n^2) algorithm

- Next, we have a Knuth-Morris-Pratt (a table to skip)

- Finally, we have a Boyer-Moore (use the end of the needle)

Do Less Work by Not Wasting Effort, Example 1

std::vector<X> f(int n) {

std::vector<X> result;

for(int i = 0; i < n; ++i)

result.push_back(X(...));

return result;

}

Initialize the collection size

std::vector<X> f(int n) {

std::vector<X> result;

result.reserve(n);

for(int i = 0; i < n; ++i)

result.push_back(X(...));

return result;

}

Do Less Work by Not Wasting Effort, Example 2

X *getX(std::string key,

std::unordered_map<std::string, std::unique_ptr<X>> &cache) {

if(cache[key])

return cache[key].get();

cache[key] = std::make_unique<X>(...);

return cache[key].get();

}

Retain the reference to the cache entry

X *getX(std::string key,

std::unordered_map<std::string, std::unique_ptr<X>> &cache) {

std::unique_ptr<X> &entry = cache[key];

if(entry)

return entry.get();

entry = std::make_unique<X>(...);

return entry.get();

}

Always do less work!

Design API’s to Help

Performance and Data Structures = “Discontiguous Data Structures are the root of all (performance) evil”

- Just say “no” to linked lists

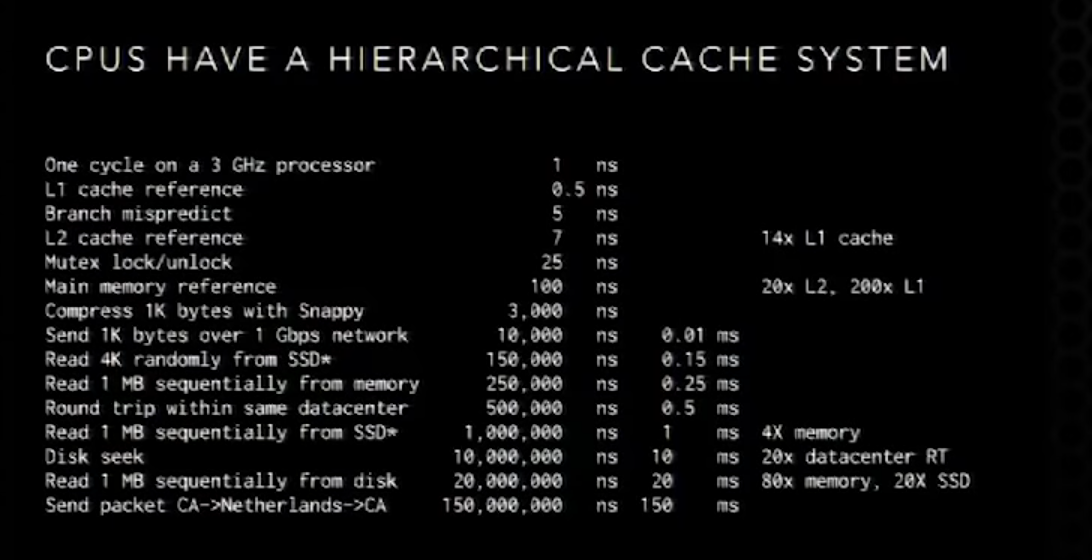

CPUs Have Hierarchical Cache System

Data Structures and Algorithms

- They’re tightly coupled, see Wirth’s books

- You have to keep both factors in mind to balance between them

- Algorithms can also influence the data access pattern regardless of the data structure used.

- Worse is better: bubble sort and cuckoo hashing